“When to Hand Off, When to Work Together”

Expanding Human-Agent Co-Creative Collaboration through Concurrent Interaction

The dominant paradigm for human-agent collaboration is delegation: describe a goal, let the agent execute, then review the result. But as agent execution becomes observable step by step, a new question emerges:

When humans can see an agent working in real time, what do they naturally want to do?

They don’t just watch and wait — they want to jump in.

We call this concurrent interaction: humans and agents working freely in the same workspace at the same time, even on overlapping parts of the work. We built CLEO to support it and studied 10 creative practitioners using it, analyzing 214 turns of human-agent interaction logs.

Delegation has emerged as the dominant paradigm for creative human-agent collaboration, yet tool calling is increasingly making agent execution observable step by step, raising an unexplored question: “when humans can see an agent working step by step, what do they naturally want to do?” Our Study 1 (N=10 professional designers) revealed that process visibility naturally prompted concurrent interaction (users intervened mid-execution and demonstrated intent through direct manipulation) but also exposed conflicts when agents could not distinguish feedback from independent work. Based on these findings, we developed CLEO, which interprets collaborative intent and adapts in real time to support concurrent interaction with the user. With CLEO, our Study 2 (N=10; a two-day study with stimulated recall interviews) analyzed 214 turns, identifying five action patterns, six triggers, and four enabling factors that explain when designers choose delegation (70.1%), direction (28.5%), or concurrent work (31.8%); these shares overlap because a single turn can involve more than one mode. We present a decision model, design implications, and an annotated dataset, positioning concurrent interaction as the key to better delegation.

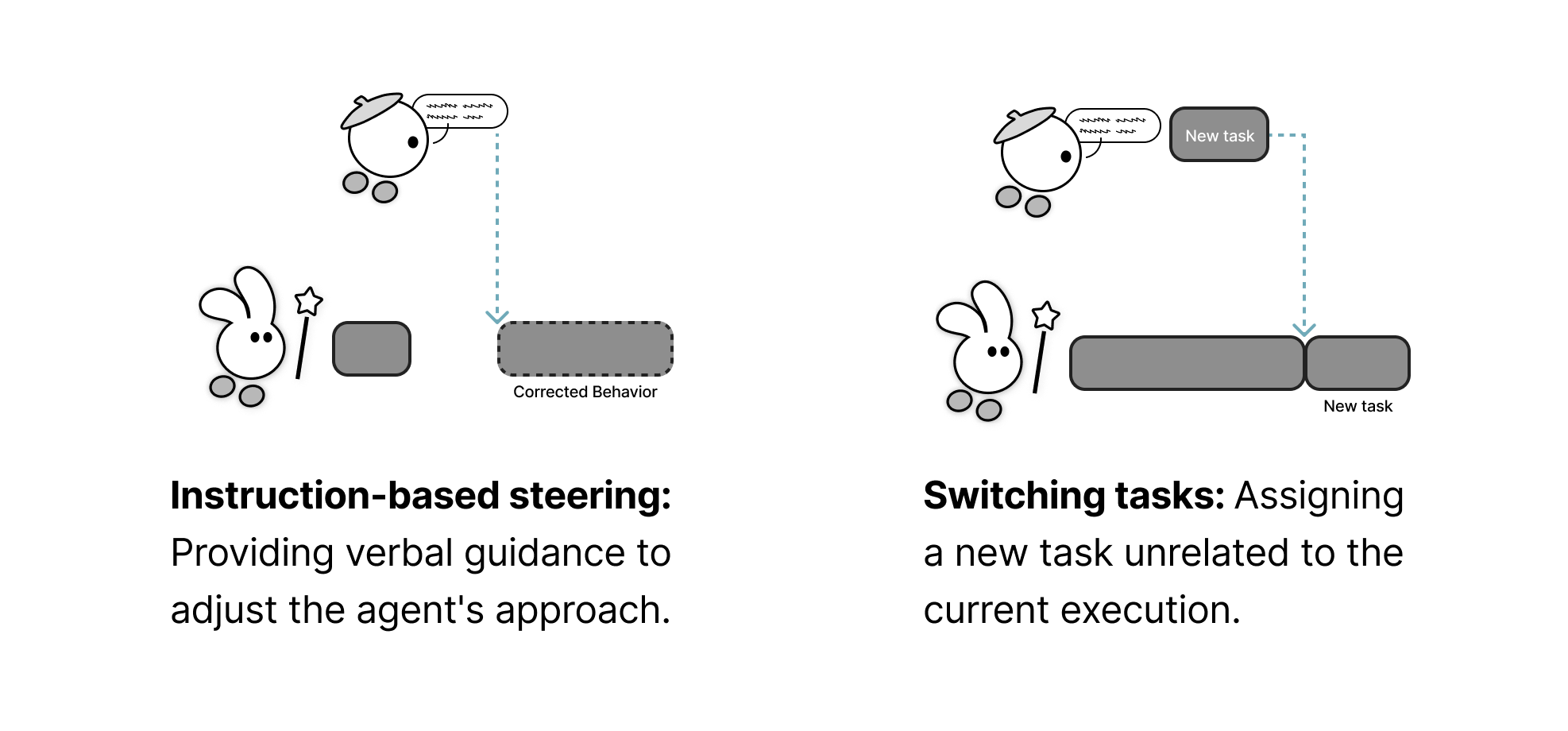

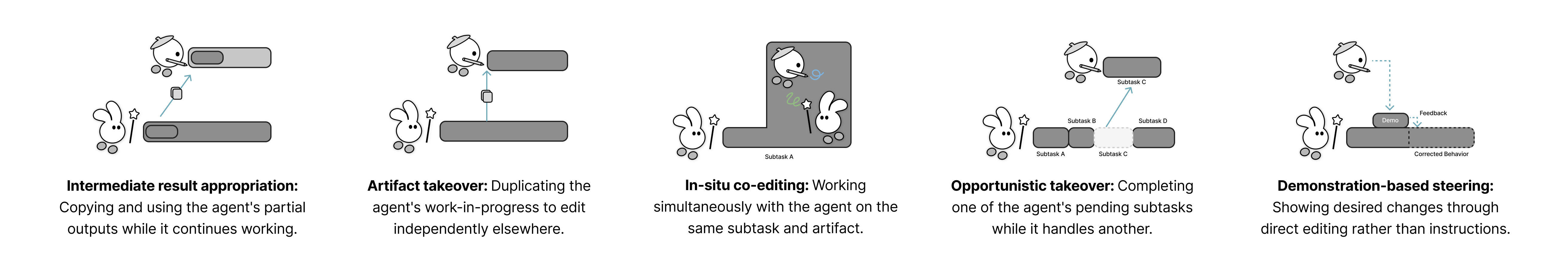

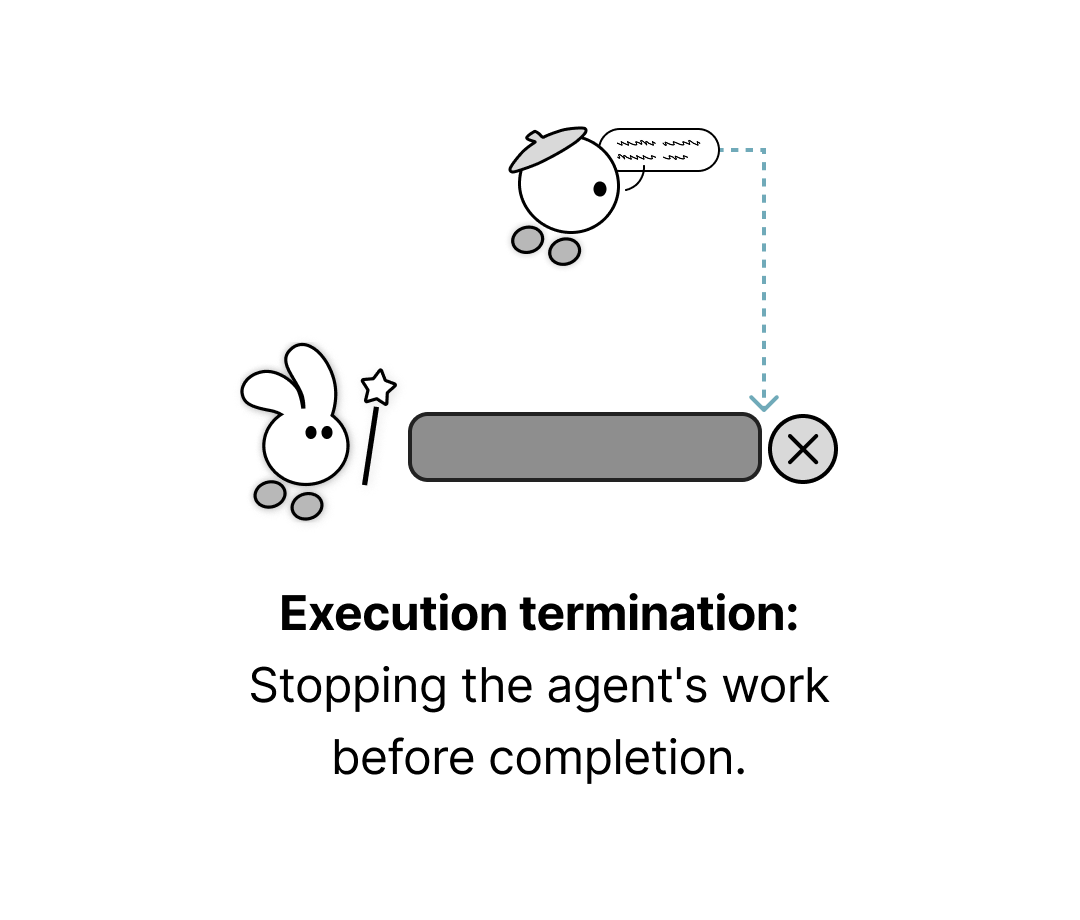

When working alongside CLEO, designers didn’t interact in one fixed way. Through analysis of 214 turns, we identified five distinct action patterns that emerged naturally during agent execution — ranging from full delegation to real-time co-editing.

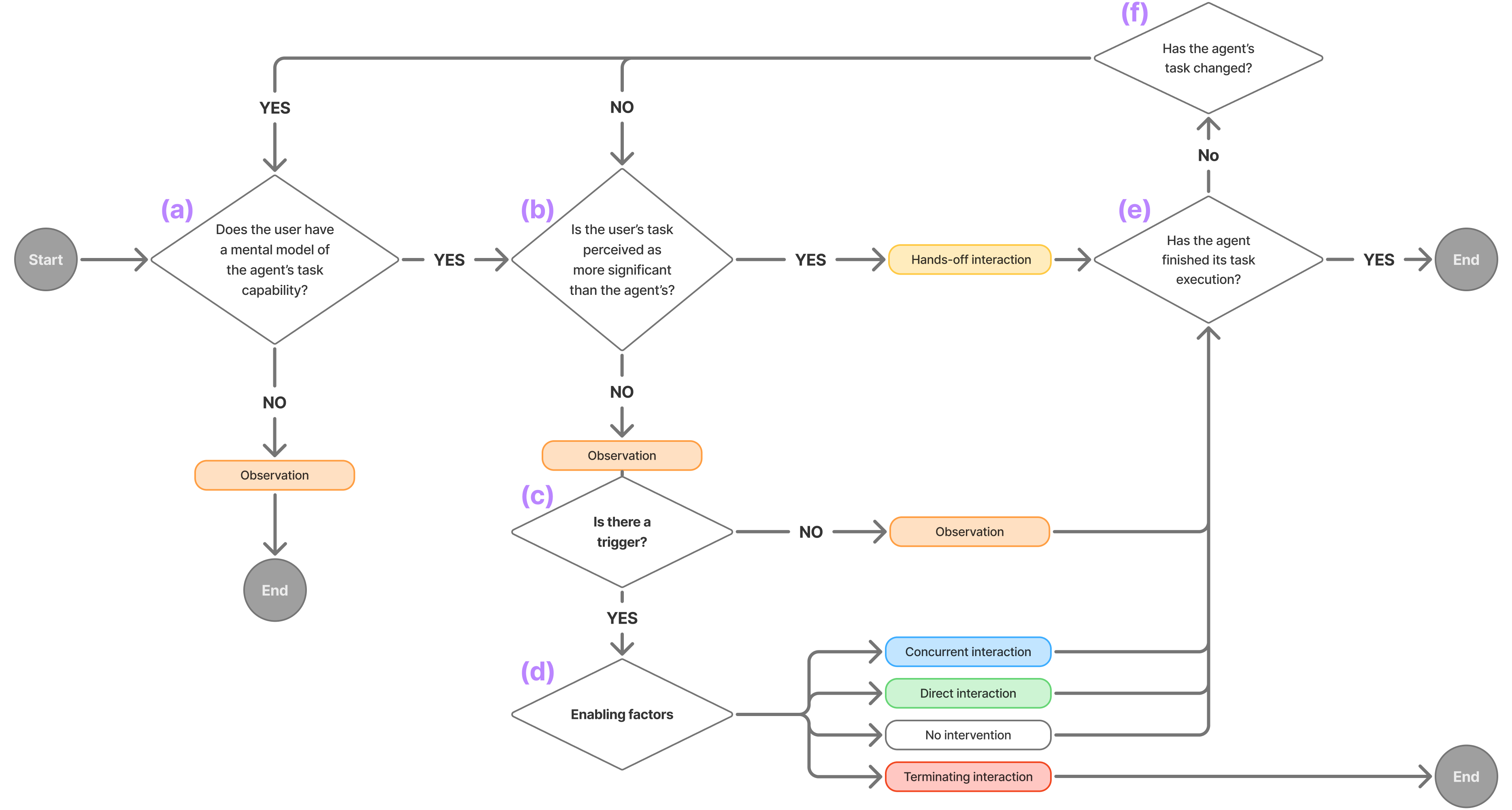

- Fully delegating: the user steps away to focus on their own work while the agent executes independently.
- Watching the agent's step-by-step execution without intervening, often to build a mental model or find the right moment to act.
- Providing verbal guidance mid-execution, either adjusting the agent's approach with new instructions or redirecting it to a new task entirely.
- Directly engaging with the agent's work-in-progress: co-editing the same element, completing a pending subtask, or demonstrating intent through direct manipulation rather than words.
- Stopping the agent's execution before completion, handing full control back to the user.
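To make the taxonomy concrete, here is a minimal Python sketch that treats each turn as a sequence of action patterns and computes the share of turns in which each pattern appears at least once. The enum names (`DELEGATE`, `OBSERVE`, `DIRECT`, `ENGAGE`, `STOP`) and the toy turns are hypothetical, not the paper's coding scheme or data. Because one turn can contain several patterns, the per-pattern shares need not sum to 100%, which is consistent with the abstract's figures for delegation, direction, and concurrent work adding up to more than 100%.

```python
from collections import Counter
from enum import Enum


class Action(Enum):
    # Hypothetical labels for the five action patterns listed above.
    DELEGATE = "fully delegating"
    OBSERVE = "watching without intervening"
    DIRECT = "verbal guidance mid-execution"
    ENGAGE = "direct engagement with work-in-progress"
    STOP = "stopping execution"


def pattern_shares(turns: list[list[Action]]) -> dict[Action, float]:
    """Percentage of turns in which each pattern occurs at least once."""
    counts: Counter = Counter()
    for turn in turns:
        counts.update(set(turn))  # count each pattern at most once per turn
    return {a: 100 * counts[a] / len(turns) for a in Action}


# Toy data, not from the study: each inner list is one turn's pattern sequence.
turns = [
    [Action.DELEGATE],
    [Action.OBSERVE, Action.ENGAGE],
    [Action.OBSERVE, Action.DIRECT, Action.ENGAGE],
    [Action.DELEGATE, Action.OBSERVE, Action.STOP],
]

shares = pattern_shares(turns)
for action, pct in shares.items():
    print(f"{action.name:<8} {pct:5.1f}%")
print(f"sum of shares: {sum(shares.values()):.1f}%")
```

With these four toy turns the shares sum to 225%, since three of the four turns mix patterns.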

We identified six triggers: specific situations that prompt users to move from passive observation to active intervention.

| Trigger | Definition |
|---|---|
| Idea Spark from Agent’s Work-in-Progress | Observing the agent’s intermediate outputs sparks new creative ideas the user hadn’t previously considered |
| Need for Early Outcome Visibility | The user wants to see or use the agent’s results sooner, to plan next steps or preview the output before completion |
| Readiness for Fine-grained Detailing | The current stage requires detailed refinement aligned with the user’s specific vision, prompting them to take over |
| Task Interpretation Mismatch | The agent pursues a valid but unintended interpretation, or the user realizes their original request was too abstract |
| Execution Quality Drop | The agent’s speed, output fidelity, or overall quality drops below an acceptable threshold |
| Emerging New Task for Agent | The user conceives a new task (a different direction or a logical next step) while observing the agent’s current work |

Even under the same trigger, different users respond differently. We identified four enabling factors that explain why.

| Enabling Factor | Definition |
|---|---|
| Mental Model of Agent Capability | Whether the user has a clear sense of what the agent can and cannot do for the current task |
| Perceived Task Importance | How the user weighs the importance of their own parallel work relative to what the agent is doing |
| Preferred Intervention Modality | Whether the user finds it easier to express intent through words or through direct manipulation, or is unsure which to use |
| Expectation of Agent Response | Whether the user believes their intervention, verbal or behavioral, will actually lead to successful task completion |

These patterns, triggers, and enabling factors don’t operate in isolation — they form a dynamic system. We synthesized our findings into a decision model that describes how users navigate collaboration across six recurring interaction loops, from the moment the agent begins a task to the moment it ends.

Within a single turn, users move fluidly among full delegation, continuous observation, concurrent engagement, and directive guidance, driven by shifting priorities, evolving confidence, and the nature of the work itself. This model accounts for all 214 observed turns without contradiction.
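One way to look for this within-turn movement in logged data is to count transitions between states inside each turn. The Python sketch below is an illustration under stated assumptions, not the paper's decision model or its six interaction loops: the state labels (`delegation`, `observation`, `concurrent`, `directive`) are hypothetical shorthand for the four states named above, and the trajectories are invented toy data.

```python
from collections import Counter

# Hypothetical shorthand labels for the four states named above.
STATES = ("delegation", "observation", "concurrent", "directive")


def transition_counts(turns: list[list[str]]) -> Counter:
    """Count (from_state, to_state) moves inside each turn; turns are not chained."""
    counts: Counter = Counter()
    for states in turns:
        unknown = set(states) - set(STATES)
        if unknown:
            raise ValueError(f"unknown state(s): {sorted(unknown)}")
        # zip pairs each state with its successor within the same turn
        counts.update(zip(states, states[1:]))
    return counts


# Toy trajectories, one list per turn, ordered from agent start to agent end.
turns = [
    ["delegation", "observation", "concurrent"],
    ["observation", "directive", "observation", "concurrent"],
    ["delegation"],
]

counts = transition_counts(turns)
multi_state = sum(len(set(t)) > 1 for t in turns)  # turns visiting 2+ states

for (src, dst), n in counts.most_common():
    print(f"{src} -> {dst}: {n}")
print(f"turns with more than one state: {multi_state}")
```

Counting pairs only within a turn keeps the sketch aligned with the model's scope, which runs from the moment the agent begins a task to the moment it ends.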

Users express fine-grained intent through action — demonstrating what they want, rather than describing it.

What you correct, what you leave alone, and what you value — concurrent actions surface the preferences that are hard to put into words.

By capturing how a user intervenes and what they care about, agents could learn to align with their working style over time — making future collaboration more personalized and delegation more confident.

For more detailed findings and design implications, see the full paper.

We release a dataset of 214 human-agent co-creative interaction turns with logged actions, triggers, and designer rationales to support future research in human-agent co-creative collaboration.

Coming soon — the dataset is currently being prepared for public release.
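Since the dataset's release format has not been published, the sketch below only illustrates how one might load and validate turn-level records carrying the three kinds of information described above: logged actions, triggers, and designer rationales. The JSON Lines format, the field names (`turn_id`, `actions`, `triggers`, `rationale`), and the action strings are all hypothetical; the trigger labels are taken from the triggers table.

```python
import json
from dataclasses import dataclass

# Trigger labels from the triggers table; everything else here is hypothetical.
TRIGGERS = {
    "Idea Spark from Agent's Work-in-Progress",
    "Need for Early Outcome Visibility",
    "Readiness for Fine-grained Detailing",
    "Task Interpretation Mismatch",
    "Execution Quality Drop",
    "Emerging New Task for Agent",
}


@dataclass
class Turn:
    turn_id: str
    actions: list[str]   # logged user/agent actions, in order (hypothetical format)
    triggers: list[str]  # zero or more trigger labels
    rationale: str       # the designer's stated reason for acting

    def __post_init__(self) -> None:
        # Reject trigger labels that are not in the table.
        unknown = set(self.triggers) - TRIGGERS
        if unknown:
            raise ValueError(f"unknown trigger(s): {sorted(unknown)}")


def load_turns(jsonl_text: str) -> list[Turn]:
    """Parse one JSON object per non-empty line into validated Turn records."""
    return [Turn(**json.loads(line)) for line in jsonl_text.splitlines() if line.strip()]


# Toy record, not taken from the dataset.
sample = json.dumps({
    "turn_id": "t001",
    "actions": ["agent_start", "user_edit", "agent_end"],
    "triggers": ["Execution Quality Drop"],
    "rationale": "Toy example: the layout was drifting, so I fixed it myself.",
})

turns = load_turns(sample)
print(turns[0].turn_id, turns[0].triggers)
```

Validating trigger labels at load time catches typos early, which matters when the same six labels are used across many annotated turns.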

@article{son2026when,
  title={"When to Hand Off, When to Work Together": Expanding Human-Agent Co-Creative Collaboration through Concurrent Interaction},
  author={Kihoon Son and Hyewon Lee and DaEun Choi and Yoonsu Kim and Tae Soo Kim and Yoonjoo Lee and John Joon Young Chung and HyunJoon Jung and Juho Kim},
  year={2026},
  eprint={2603.02050},
  archivePrefix={arXiv},
  primaryClass={cs.HC}
}

This research was conducted at KIXLAB, KAIST.